Over the past year we have witnessed a major shift in the field of graphic design, driven by the development of applications that use “deep learning” [1] to generate images and illustrations based on textual input. The best-known apps in this category include DALL-E 2 and Midjourney, which we have recently used to design several images for posts, as well as Stable Diffusion, which is freely available. We will discuss the technology behind these programs.

Advertisement

The new applications include an image generator. It generates a random picture from an initial image that is composed of randomly selected pixels (“noise”), as well as a component that uses the text you enter to influence the content of the image. This method described below is known as a “diffusion model” and it is used in drawing apps such as Stable Diffusion. An excellent explanation can be found in video [2] of the Computerphile series.

We will explain how the app works by comparing it to the process of creating a sculpture. Imagine a skilled, well-trained sculptor is given an unhewn block of stone (like an image made up of random pixels), and he begins to chisel it. Uncertain what to create, so he cuts away a few protrusions that will probably not be part of the final sculpture. After a while, he stops to look at what he has made so far. He notices that the stone he has started to sculpt resembles many different types of sculptures he is familiar with from his extensive experience. He randomly chooses a direction that could lead to various possibilities, and starts chiselling again. After some time, he pauses again and realizes that the stone could take the shape of a more specific type of sculpture. He randomly selects a direction, chisels a little, takes a look, and repeats this process over and over again. Eventually, the statue takes shape, becoming similar to, but not identical to, one of the sculptures stored in his memory. This gradual creation process, involving random choices at every stage, produces a random yet high-quality result.

And there is another important addition: imagine that, at each stage of the construction process, a disembodied voice whispers an instruction to the sculptor, such as “Make us an angel statue” (similar to selecting an image theme by entering text). The sculptor then follows the same process, but at every stage he only considers angel statues, gradually tuning himself towards creating a random angel statue.

Similarly, in an image-generation app that uses a neural network for “deep learning” [1], the network starts with a picture made of random pixels (“an unhewn block of stone” in our analogy) and by gradually changing the pixels, produces a high-quality image defined by text. To achieve this, the network must first be trained using real images. The Stable Diffusion software has a database of 2.3 billion images for this purpose. During training, images are taken from the database and a random positive or negative value is added to each pixel, representing normally distributed noise (“Gaussian noise”), producing noisy images. The aim is to teach the network to locate and identify the added noise so that the original image can be recovered by subtracting the noise component from the noisy image.

In our analogy, an instructor gives a novice sculptor a block of stone containing the beginnings of a well-known statue, but with extra layers of stone (“noise”) on top. The novice must then learn how to proceed. As the famous saying by Michelangelo's goes, “The statue is already in the stone, and the sculptor’s task is to remove the excess stone.” The developers found that it is sometimes easier to first estimate the noise component and then find a suitable image. For example, if a noisy image contains a black area with a single white pixel, this pixel is likely to be noise that should be removed.

The learning algorithm is executed in many small steps, around a thousand for example. For each image in the database, we start with a clean image, adding random noise at each step. We then inform the network of the total amount of noise (in mathematical terms, the mean and variance of the Gaussian distribution), and train it to produce an image that is closer to the original. In the early stages, when the noise level is low, the network performs well. However, in the later stages, when the images are mostly noise, its performance drops and it struggles. Yet the process works! How?

Having described the training process, we will now describe the inference phase. Let’s create an image of random pixels and feed it to the network. We inform the network that we have reached the final step, and provide the corresponding noise level. The network will do its best, as it was trained, to estimate the noise and thereby generate an approximation of the original image (which never actually existed…). This estimate is quite poor, but it is presumably “smoother”, and this is only the beginning. Next, we add Gaussian noise to the estimated image at the intensity corresponding to the penultimate step, before feeding it back to the network. The network will use its experience to estimate the noise for this step and will create a slightly less noisy image. As this process continues, the noise level decreases, until, miraculously, we obtain a random image based on one of those in the database.

This sounds lovely, but what's more important, and more complex, is the ability to integrate text into this process. As mentioned, users can direct the creation of the image by writing a sentence. First, the software interprets the semantic meaning of the sentence. The algorithms currently used for this purpose are called Transformers. We wrote about such an algorithm called BERT[3], and we are all familiar with ChatGPT. The result of this stage is a numerical representation of the sentence’s semantic meaning. Each image in the software’s vast database, which was created by collecting images from the web, is also accompanied by a text description. Therefore, the network can direct the image creation process towards images that match the text we wrote. At each stage of creation, the application instructs the network: “Clean the noise so that the image matches the set of images relevant to the text.” Since the process consists of many stages, the network gradually synchronizes and uses only the desired images. The final product is an image composed of a random combination of pictures related to the text. Users can run the application multiple times with the same text and receive different images, choosing the one that looks best.

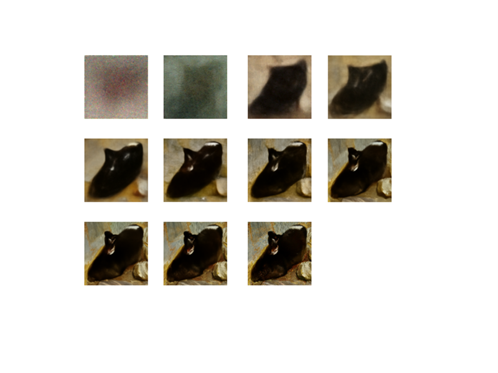

Since they appeared, these applications have generated enormous media buzz, attracting plenty of enthusiasts and detractors alike. We asked Miri, an artist and designer who recently created several images for our posts using Midjourney, how she thinks artists can add a personal touch. She said that she likes to guide the application by using an advanced option called “image to image”. She feeds the application a sketch or a photograph that she has created herself. For the illustration she created for this post, Miri used a quick sketch she had prepared. Once she was happy with the result, she redrew it in her own illustrative style. Compare the featured image of this post with the image produced by an application:

Image design: Miri Orenstein with the assistance of the Midjourney AI software

Hebrew editing: Smadar Raban

English editing: Gloria Volohonsky

References: